- All

- Video Editing

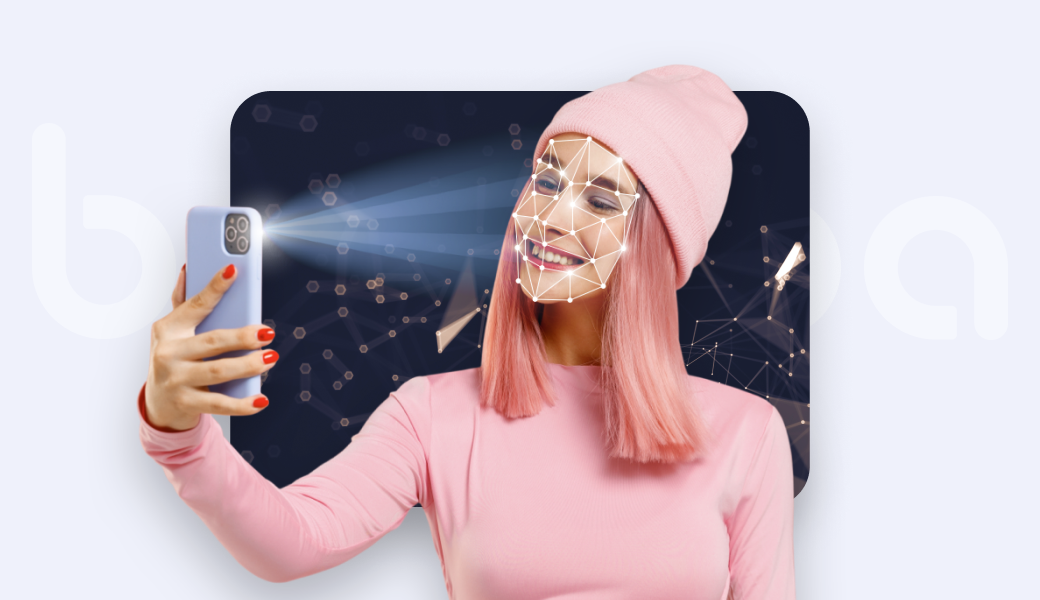

- Face Augmented Reality

- Virtual Try-on

- Face AR SDK Releases

- AR Commerce

- Face Filter SDK

- Case Study

- Video Editor SDK Release

- Beauty AR SDK

- Video Conferencing

- Web AR

- AR Makeup

- Face Tracking

- News

- Virtual Background

- Virtual Try-on Releases

- Unity

- Success Stories

- Hair Recolor

- AR In Marketing

- Featured

- Photo Booth

- Snapchat Technology

- Photo Editor SDK
